Research Overview

My research develops interpretable, computationally scalable machine-learning methods for astronomical survey discovery, with a particular focus on time-domain astrophysics, extragalactic transients, and survey systematics. The central goal of my work is to identify scientifically valuable objects and phenomena that are systematically missed, misclassified, or discarded by traditional pipelines. I work at the intersection of deep learning, active anomaly detection, and large-scale survey infrastructure, with applications to major surveys including ZTF, Euclid, JWST, and the Rubin Observatory.

Previous and Ongoing Research

Deep learning for astronomical image reconstruction.

A major strand of my work addresses the limitations imposed by source blending, PSF variability, and atmospheric seeing in optical and near-infrared surveys. I have developed deep-learning–based deblending and deconvolution methods that significantly outperform traditional tools while remaining efficient enough for survey deployment. This includes residual dense neural networks for complex source deblending and lightweight, PSF-aware autoencoder architectures for image reconstruction, enabling more accurate recovery of galaxy fluxes, shapes, and colours in crowded and low-signal regimes.

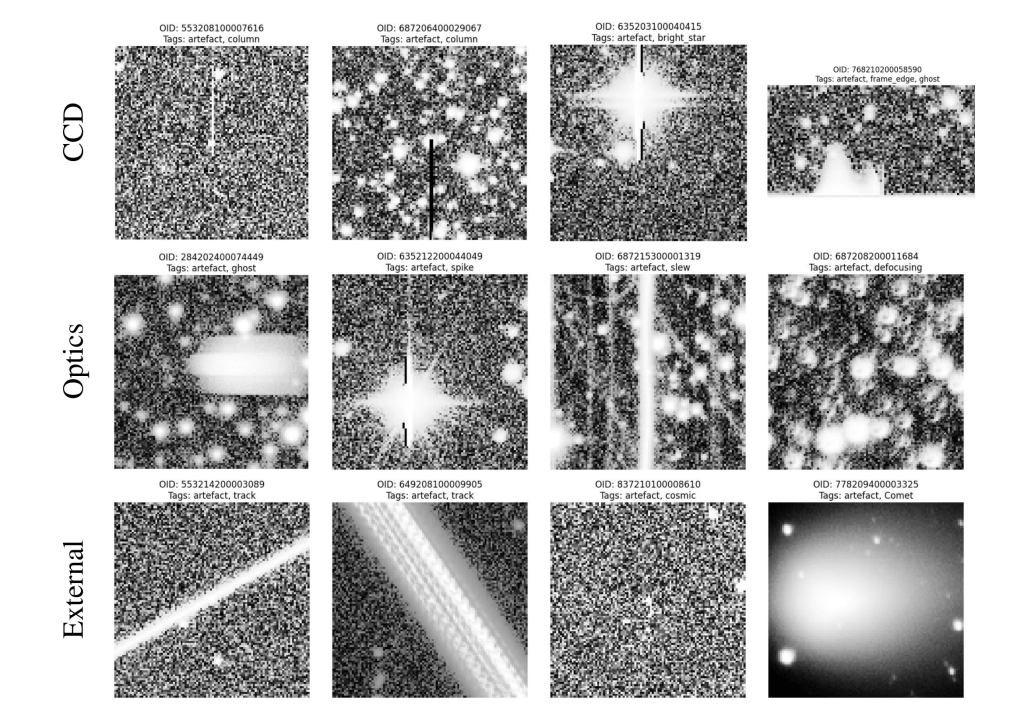

Artefact-driven discovery in time-domain surveys.

Rather than treating survey artefacts purely as contaminants, my work reframes them as opportunities for discovery. Within the SNAD collaboration, I led the creation of the first large, expert-labelled public catalogue of ZTF artefacts using active anomaly detection. This effort not only produced a valuable benchmarking dataset, but also revealed unexpected astrophysical systems, including previously uncatalogued variables. Most notably, I demonstrated that artefacts caused by saturated stars encode reliable variability information, opening a new pathway to recover long-term light curves for bright sources that are typically excluded from time-domain analyses.

Active anomaly detection pipelines

My current work focuses on developing and comparing active anomaly-detection frameworks for survey-scale data. I am leading a large-scale comparison of PineForest and Astronomaly on hundreds of thousands of ZTF light curves, and have designed a hybrid pipeline that combines their strengths. This approach rapidly converges on rare and unusual objects while remaining interpretable and user-guided, making it well suited for real-time alert streams such as Rubin/LSST. Early applications have already identified new variable sources and targets for spectroscopic follow-up.

Current and Future Research

Looking ahead, I am extending active anomaly detection from the time domain to the image domain, with a particular emphasis on Euclid imaging data. Using representation learning and hybrid anomaly-detection workflows, my goal is to identify objects that lie outside the training distributions of standard selection algorithms, including extreme-redshift galaxies, strong-lensing outliers, and other rare or novel systems. Promising candidates will be rapidly shared with survey science teams and followed up spectroscopically through established collaborations.

Across all projects, my long-term vision is to treat artefacts, saturation, and pipeline failures as discovery spaces rather than nuisances, and to build reproducible, interpretable machine-learning infrastructure that enables scientific insight at survey scale.

You must be logged in to post a comment.